
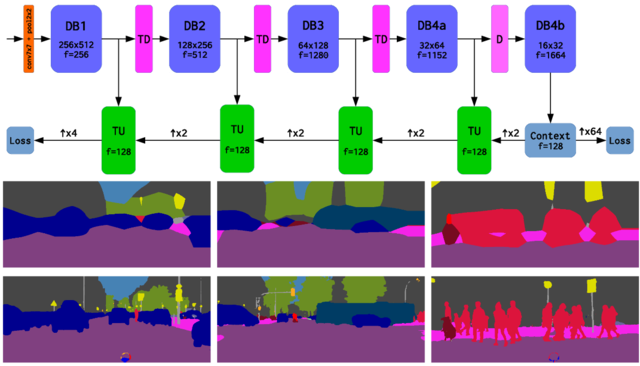

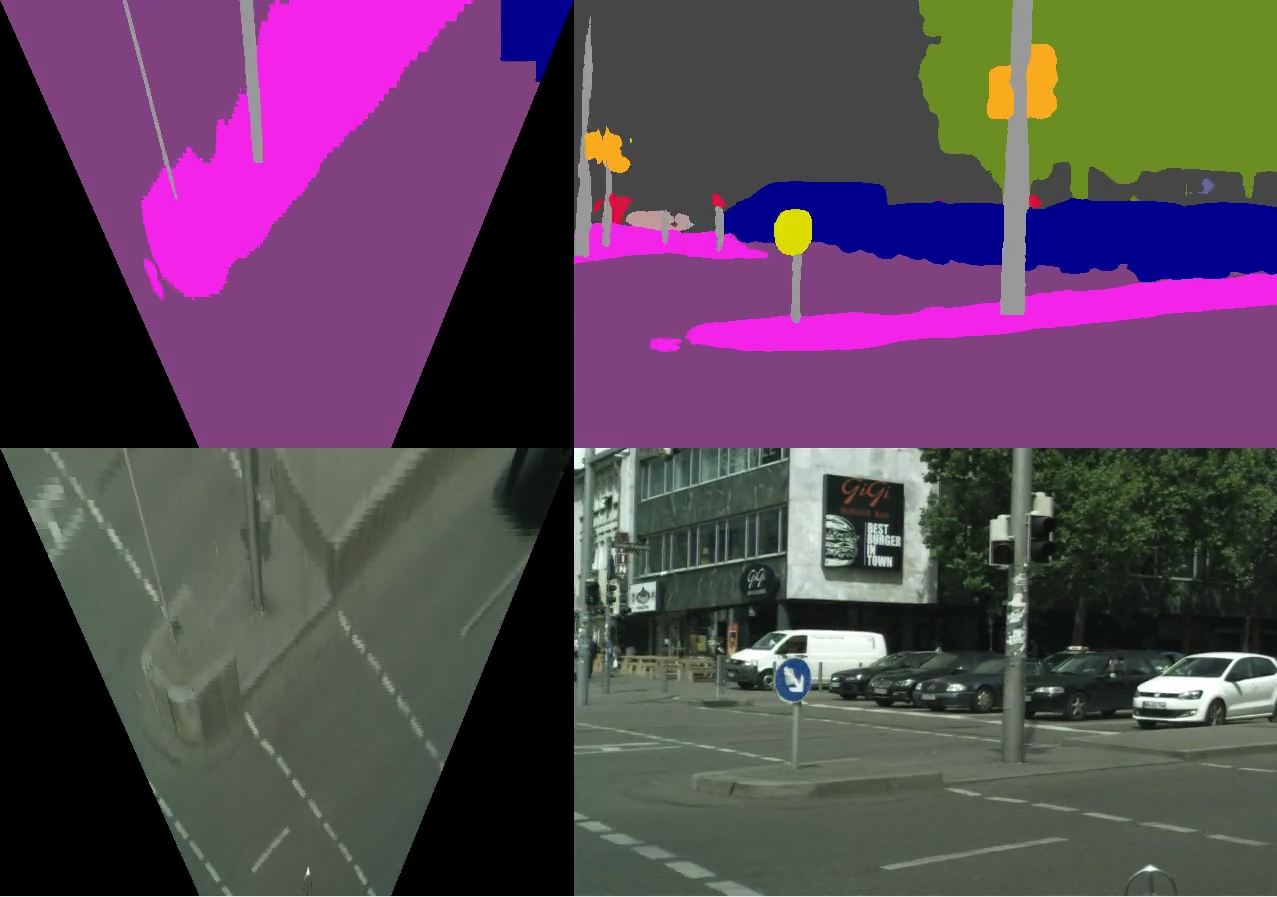

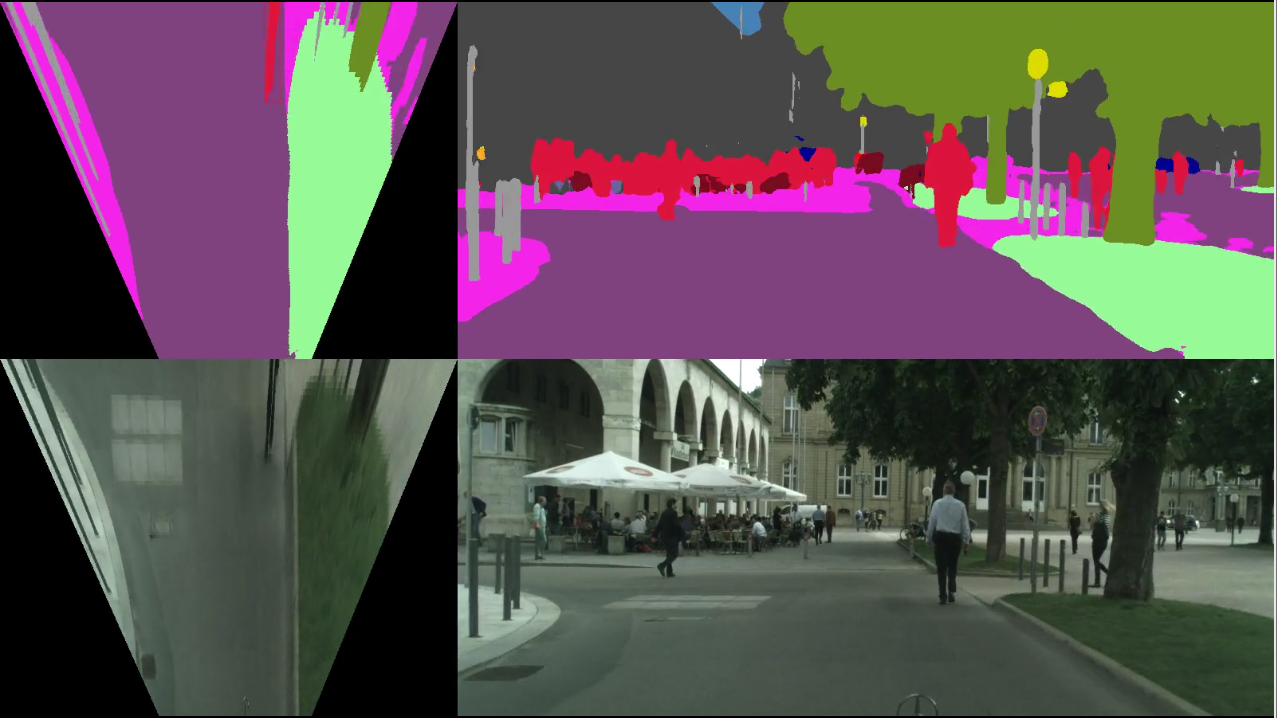

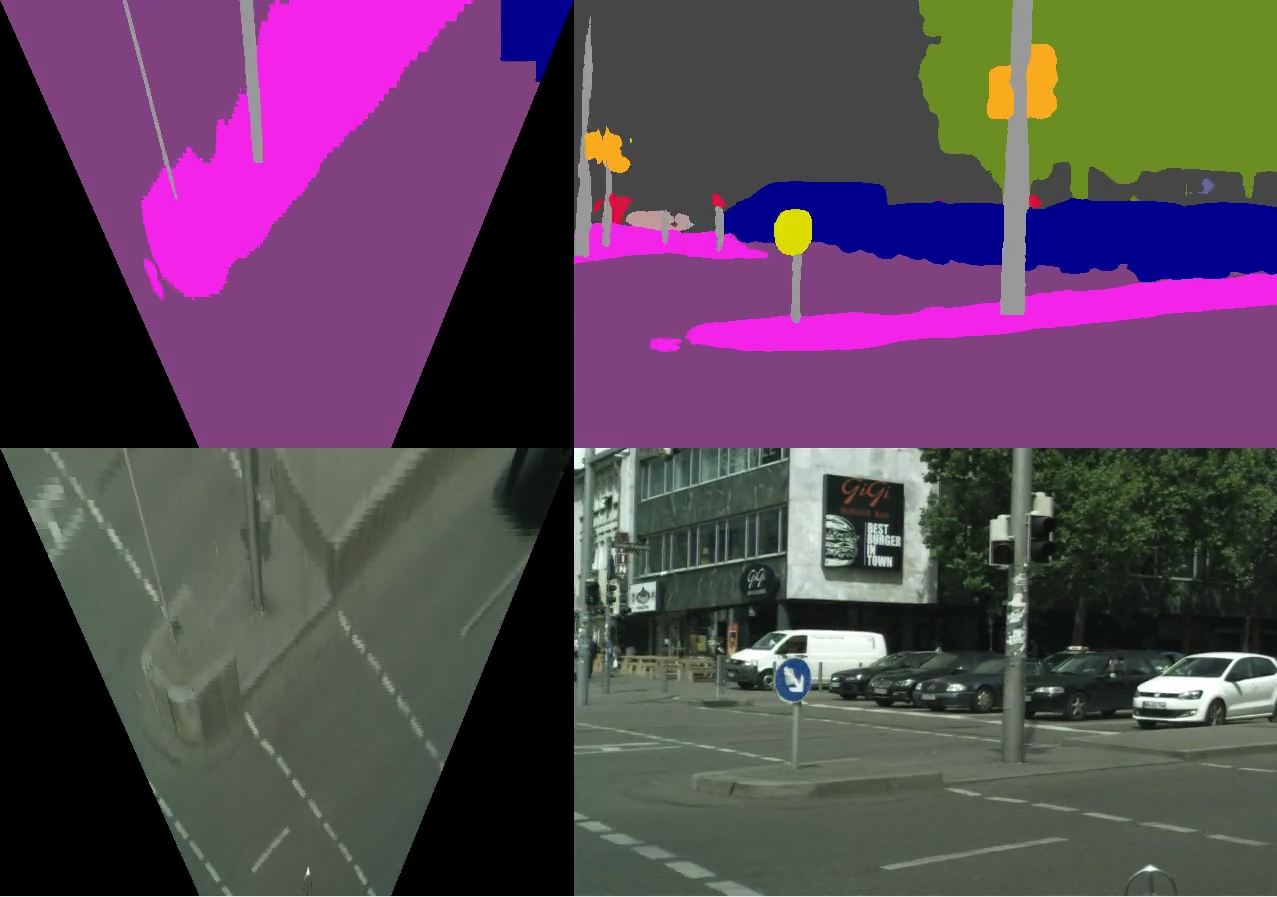

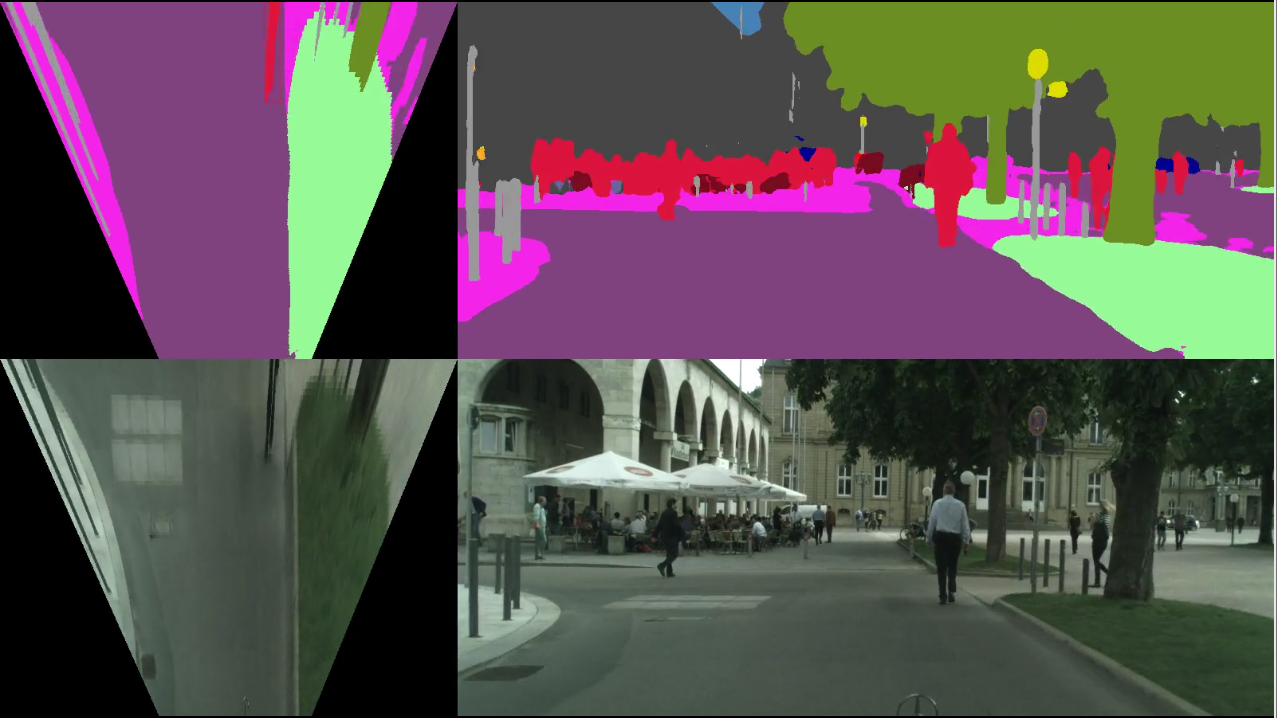

- We apply the inverse perspective transform to the semantic map recovered by Ladder-DenseNet-121 (74.3 mIoU, 7.5 Hz on a Titan X).

- The resulting bird's-eye semantic view (top-left subfigure in both figures on the right) makes it possible to detect drivable parts of the environment.

- The technique could support the following reasoning within an autonomous driving system:

  - The vehicle can navigate over purple regions in the map (road).
  - Exceptionally, the vehicle can prudently drive over pink and light green regions in the map (sidewalk, terrain).
  - All other colours represent various kinds of obstacles (pedestrians, cars, etc.).
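
To illustrate the inverse perspective transform mentioned in the first bullet, here is a minimal sketch that warps a semantic label map into a bird's-eye grid. It assumes the ground plane is related to the image by a known 3x3 homography; the function name `warp_labels`, the matrix `H_bev_to_img`, and the `fill` value are hypothetical and do not come from the original system. Nearest-neighbour sampling is used because class labels are categorical and must not be interpolated.

```python
import math


def warp_labels(labels, H_bev_to_img, out_h, out_w, fill=-1):
    """Warp a 2-D label map into a bird's-eye grid by inverse mapping.

    labels        -- list of rows, each a list of class ids (camera view)
    H_bev_to_img  -- assumed 3x3 homography mapping bird's-eye pixel
                     (u, v, 1) to camera pixel (x, y, w), given as nested lists
    fill          -- value written where the bird's-eye cell is unobserved
    """
    H = H_bev_to_img
    in_h, in_w = len(labels), len(labels[0])
    out = [[fill] * out_w for _ in range(out_h)]
    for v in range(out_h):
        for u in range(out_w):
            # Project the bird's-eye pixel into the camera image.
            x = H[0][0] * u + H[0][1] * v + H[0][2]
            y = H[1][0] * u + H[1][1] * v + H[1][2]
            w = H[2][0] * u + H[2][1] * v + H[2][2]
            if abs(w) < 1e-12:
                continue  # point at infinity, leave the cell unobserved
            # Nearest-neighbour lookup keeps labels categorical.
            col = int(math.floor(x / w + 0.5))
            row = int(math.floor(y / w + 0.5))
            if 0 <= row < in_h and 0 <= col < in_w:
                out[v][u] = labels[row][col]
    return out


# Toy usage: a pure translation by one pixel to the right.
toy_labels = [[0, 1], [2, 3]]
shift = [[1, 0, 1], [0, 1, 0], [0, 0, 1]]
toy_bev = warp_labels(toy_labels, shift, out_h=2, out_w=2)
```

In practice the homography would come from camera calibration, and a vectorised library routine would replace the Python loops; the sketch only shows the mapping direction and the sampling rule.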

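
The colour-based reasoning listed above can be sketched as a lookup from semantic class to a drivability level. This is only an illustration under stated assumptions: the names `Drivability`, `classify`, and `drivability_map` are hypothetical, the class names follow the bullets (road, sidewalk, terrain), and any other label, including unobserved cells, is conservatively treated as an obstacle.

```python
from enum import Enum


class Drivability(Enum):
    FREE = "free"          # normal navigation (road)
    CAUTION = "caution"    # exceptional, prudent driving (sidewalk, terrain)
    OBSTACLE = "obstacle"  # everything else (pedestrians, cars, etc.)


# Assumed class names, taken from the bullets above.
FREE_CLASSES = {"road"}
CAUTION_CLASSES = {"sidewalk", "terrain"}


def classify(label):
    """Map one semantic class name to a drivability level."""
    if label in FREE_CLASSES:
        return Drivability.FREE
    if label in CAUTION_CLASSES:
        return Drivability.CAUTION
    # Unknown or unobserved cells fall through to the safest choice.
    return Drivability.OBSTACLE


def drivability_map(bev_labels):
    """Apply classify() to every cell of a bird's-eye label grid."""
    return [[classify(label) for label in row] for row in bev_labels]


# Toy usage on a 2x3 bird's-eye grid.
grid = [
    ["road", "road", "sidewalk"],
    ["road", "car", "terrain"],
]
levels = drivability_map(grid)
```

A planner could then restrict normal paths to `FREE` cells and allow `CAUTION` cells only for exceptional manoeuvres, which mirrors the three cases in the list.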